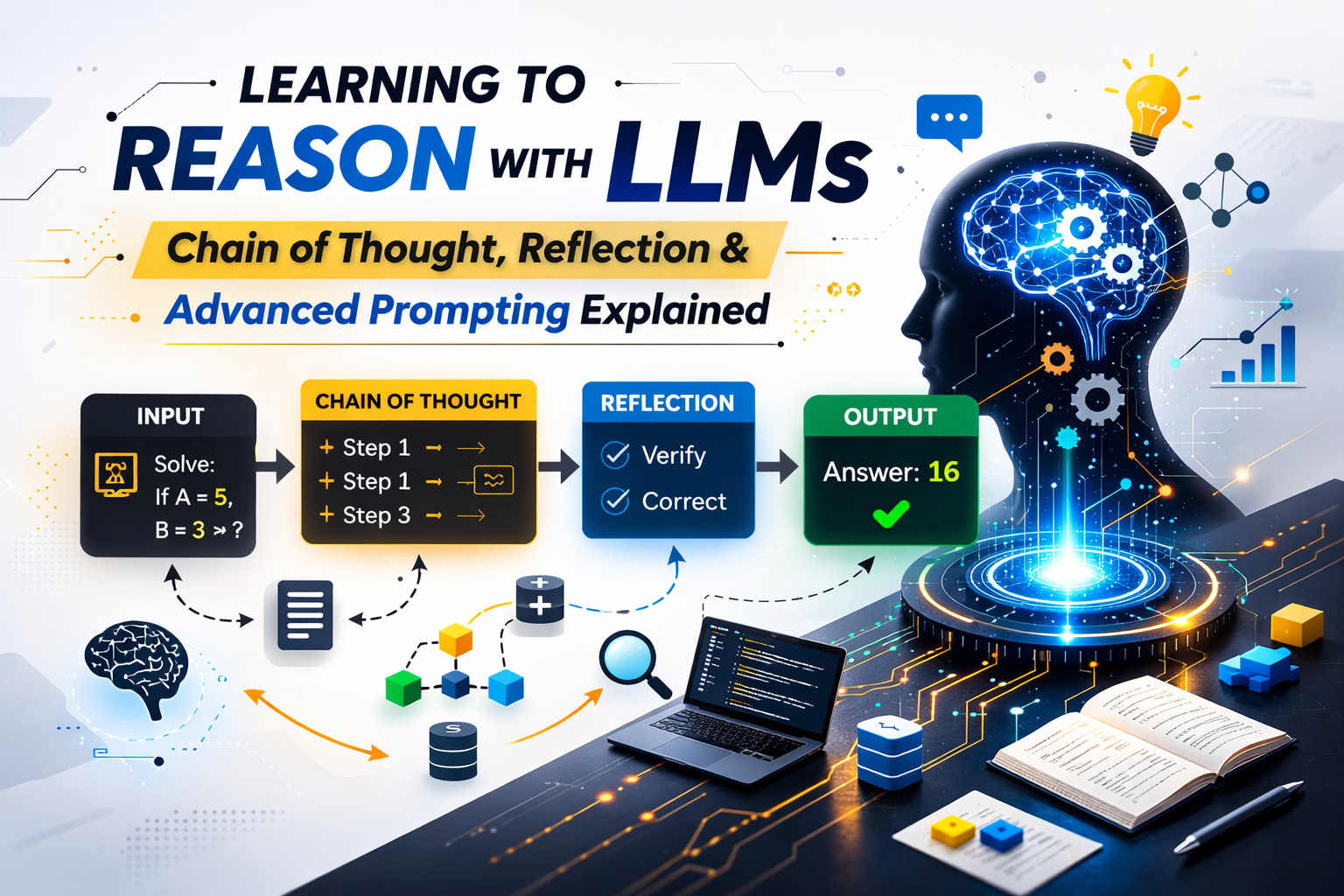

Learning to Reason with LLMs: Chain of Thought, Reflection, and Advanced Prompting Explained

Large Language Models don’t truly think — they predict. So how do we make them reason? In this deep dive, explore Chain of Thought, reflection prompting, and advanced reasoning techniques that turn LLMs into powerful problem solvers for real-world AI systems.

Artificial Intelligence is everywhere.

But not all AI is equal.

Some AI systems recognize faces.

Some recommend movies.

Some detect fraud.

And then there are Large Language Models (LLMs) — systems that can write essays, debug code, explain physics, and even simulate reasoning.

But here’s the real question:

Are LLMs actually thinking?

Or are they just extremely good at pretending?

This blog is about that gap.

We’re going to explore:

- What an LLM actually is

- Why LLMs feel “smarter” than traditional AI

- What reasoning means in the context of LLMs

- Chain of Thought prompting

- Reflection techniques

- Advanced prompting strategies

- Why LLMs still fail

- How developers can design better reasoning systems

Let’s begin at the foundation.

What Is an LLM?

LLM stands for Large Language Model.

It’s a type of AI trained on massive amounts of text data to predict the next word (technically, next token) in a sequence.

That’s it.

Yes — that simple.

Under the hood, models like:

- OpenAI

- Google DeepMind

- Anthropic

- Meta AI

train neural networks with billions (or trillions) of parameters.

These models don’t “understand” language the way humans do.

They learn statistical patterns.

Example:

If you give it:

“The capital of France is…”

It predicts:

“Paris”

That probability prediction — repeated billions of times during training — is what gives LLMs their power.

Why Are LLMs Better Than Traditional AI?

Let’s compare.

Traditional AI (Rule-Based or Narrow ML)

- Hard-coded rules

- Task-specific

- Limited flexibility

- Needs structured data

- Breaks outside defined boundaries

Example:

A traditional chatbot:

IF user says "hello"

THEN respond "Hi!"It doesn’t adapt.

LLM-Based AI

- Context-aware

- General-purpose

- Can write, code, summarize, reason

- Learns from massive unstructured data

- Can adapt to new tasks via prompting

You don’t retrain it.

You guide it.

That’s revolutionary.

LLMs are foundation models — they are not built for one task. They are adaptable.

That’s why they feel smarter.

But here’s the catch:

They don’t actually “think.”

Do LLMs Actually Reason?

This is where things get interesting.

When you ask:

If a train travels 60 km/h for 3 hours, how far does it go?

It gives:

180 km

It looks like reasoning.

But what’s happening internally?

It predicts tokens that statistically follow similar patterns in training data.

However…

Researchers discovered something surprising:

If you force LLMs to explain their reasoning step by step…

They perform better.

That discovery changed everything.

What Is Reasoning in LLMs?

Reasoning in LLMs refers to:

- Multi-step logical thinking

- Mathematical deduction

- Cause-effect analysis

- Structured problem solving

But because LLMs are probabilistic models, they need help structuring their thought process.

This is where Chain of Thought prompting comes in.

Chain of Thought Prompting

Chain of Thought (CoT) prompting forces the model to explain its reasoning step by step.

Instead of asking:

What is 27 × 14?

You ask:

What is 27 × 14? Let's solve it step by step.Now watch the difference.

Without Chain of Thought

27 × 14 = 368

(Might be wrong.)

With Chain of Thought

27 × 14

= 27 × (10 + 4)

= 270 + 108

= 378

More accurate.

Why?

Because generating intermediate steps reduces reasoning errors.

The model is more likely to stay logically consistent.

This technique became famous after research papers showed huge improvements in math and logic tasks.

Example: Real Reasoning Prompt

Bad Prompt:

A farmer has 3 fields with 10 cows each. If he sells 5 cows, how many remain?

Better Prompt:

A farmer has 3 fields with 10 cows each.

First calculate total cows.

Then subtract cows sold.

Explain step by step.

Output:

3 fields × 10 cows = 30 cows.

30 - 5 = 25 cows remaining.

Cleaner. Safer. More reliable.

That’s learning to reason with LLMs.

Reflection Prompting (Self-Correction)

Now we go deeper.

Chain of Thought helps generate reasoning.

Reflection helps validate reasoning.

Example:

Solve this math problem step by step.

After solving, review your answer and check for mistakes.

This triggers a second reasoning pass.

Many times, the model corrects itself.

This is similar to human behavior:

- Solve problem

- Re-check solution

- Catch mistake

That loop dramatically improves reliability.

Advanced Prompting Techniques

Let’s level up.

1. Self-Consistency Prompting

Instead of generating one answer, you generate multiple reasoning paths.

Then choose the most common final answer.

This reduces randomness.

2. Tree of Thoughts

Instead of one reasoning path…

The model explores multiple branches.

Think of it like chess.

Evaluate multiple moves.

Pick best path.

This improves complex problem solving.

3. Structured Output Prompting

Instead of free text:

Return answer as JSON:

{

"steps": [],

"final_answer": ""

}

This reduces hallucination.

For developers (like you building AI systems), this is powerful.

Why LLMs Still Fail

Even with reasoning prompts, LLMs:

- Hallucinate facts

- Overconfidently lie

- Make arithmetic mistakes

- Struggle with deep symbolic logic

Why?

Because they predict probabilities — not truth.

There’s no built-in fact checker unless you connect one.

That’s why modern AI systems combine:

- LLM

- Search

- Calculator

- Database

- Code execution

This is called tool-augmented reasoning.

Example: LLM + Calculator Tool

Instead of trusting math reasoning:

- LLM parses equation

- Sends it to calculator

- Returns verified result

Now you reduce hallucination.

This is how serious AI systems are built.

From Prompt Engineering to Reasoning Engineering

Old mindset:

“How do I write a better prompt?”

New mindset:

“How do I design a reasoning system?”

You don’t just prompt once.

You design:

- Input structuring

- Step generation

- Validation

- Reflection

- Tool execution

- Output formatting

That’s the future.

Why Learning to Reason with LLMs Matters

If you:

- Build AI apps

- Create AI startups

- Develop AI agents

- Integrate LLMs in backend systems

You must understand reasoning techniques.

Because raw prompting is not enough anymore.

The real power comes from:

- Structured reasoning

- Controlled outputs

- Multi-step logic

- Validation loops

Final Thoughts

LLMs don’t think.

But they can simulate thinking.

And when guided correctly, that simulation becomes powerful.

Learning to reason with LLMs means:

- Understanding their limits

- Structuring their thought process

- Adding reflection

- Using tools

- Designing workflows

The future of AI isn’t just bigger models.

It’s better reasoning.

And the developers who understand this shift will build the next generation of intelligent systems.